[Deep Dive] Gastown

The Four Architectural Decisions That Make It Actually Work. Here is the technical breakdown of why Gastown can sustain 20-30 autonomous agents working for days without human intervention.

We’ve all seen the demos where five AI agents “collaborate” to build a snake game in 30 seconds. But when you try to apply that standard orchestrator-worker model to a massive, real-world codebase, it breaks. The orchestrator gets confused, context windows overflow, state files corrupt, and the whole hive mind grinds to a halt.

Much like with OpenClaw, we just went through the Gastown repository to understand how it solves the multi-agent scaling problem. It turns out, it doesn’t just prompt better -- it fundamentally rewires how agents relate to work, memory, and failure.

Here are the four genuine innovations that enable Gastown to run autonomous multi-agent systems reliably.

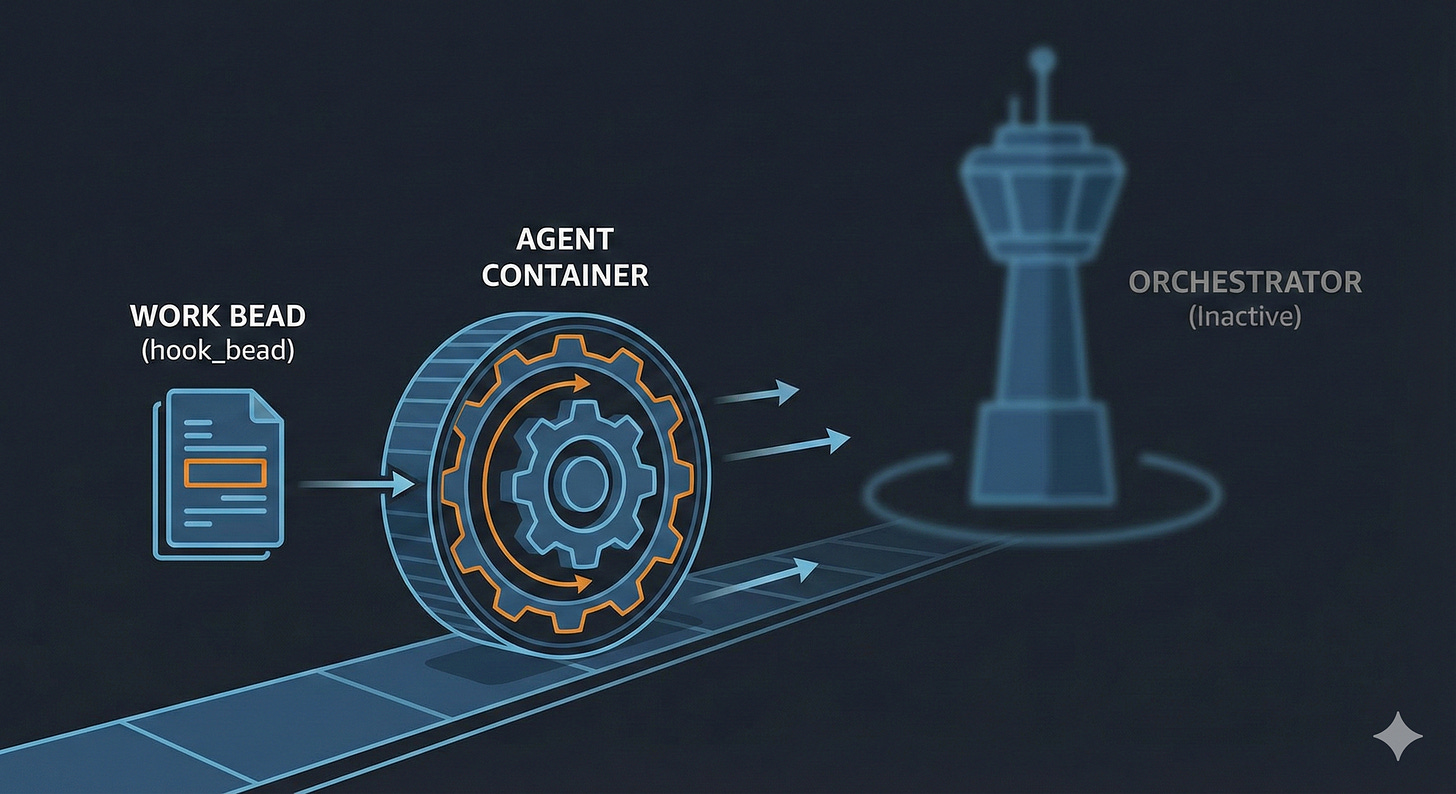

1. Work Propels Itself

In a traditional setup, you have an Orchestrator. The orchestrator polls for work, assigns it to an agent, monitors for completion, handles failures, and reassigns tasks. The orchestrator is a massive bottleneck and a single point of failure.

Gastown inverts this architecture: work is attached directly to the agents and executes itself.

Work is written to an agent’s hook_bead. When a session starts, that hook fires automatically, and the agent begins executing the work. There is no orchestrator bottleneck constantly managing the loop.

Why this matters: If an agent crashes mid-work, the system doesn’t need an orchestrator to figure out what went wrong. The hook is still set. The next time the session starts, the hook fires, and the work continues. Zero human intervention. The work itself is the propulsion mechanism.
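To make the crash-and-resume behavior concrete, here is a minimal sketch in Python. It is not Gastown's code: the `agents` table layout, the function names `attach_work` and `start_session`, and the choice to clear the hook only after the work returns are all assumptions made for illustration. The only detail taken from the description above is that work lives in an agent's `hook_bead` and fires when a session starts.

```python
import sqlite3


def make_town():
    """Create an in-memory store with one row per agent (hypothetical schema)."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE agents (name TEXT PRIMARY KEY, hook_bead TEXT)")
    return db


def attach_work(db, agent, bead):
    """Attach work by writing the agent's hook. Nothing polls or assigns."""
    db.execute(
        "INSERT OR REPLACE INTO agents (name, hook_bead) VALUES (?, ?)",
        (agent, bead),
    )


def start_session(db, agent, execute):
    """On session start, fire the hook if one is set.

    The hook is cleared only after `execute` returns, so a crash mid-work
    leaves it in place for the next session (an assumption of this sketch).
    """
    row = db.execute(
        "SELECT hook_bead FROM agents WHERE name = ?", (agent,)
    ).fetchone()
    if row is None or row[0] is None:
        return None  # nothing hooked, so the session has nothing to do
    bead = row[0]
    execute(bead)  # may raise, which simulates a crash mid-work
    db.execute("UPDATE agents SET hook_bead = NULL WHERE name = ?", (agent,))
    return bead
```

If `execute` raises partway through, the row still holds the bead, so calling `start_session` again picks up the same work without anything having to notice the failure or reassign the task.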

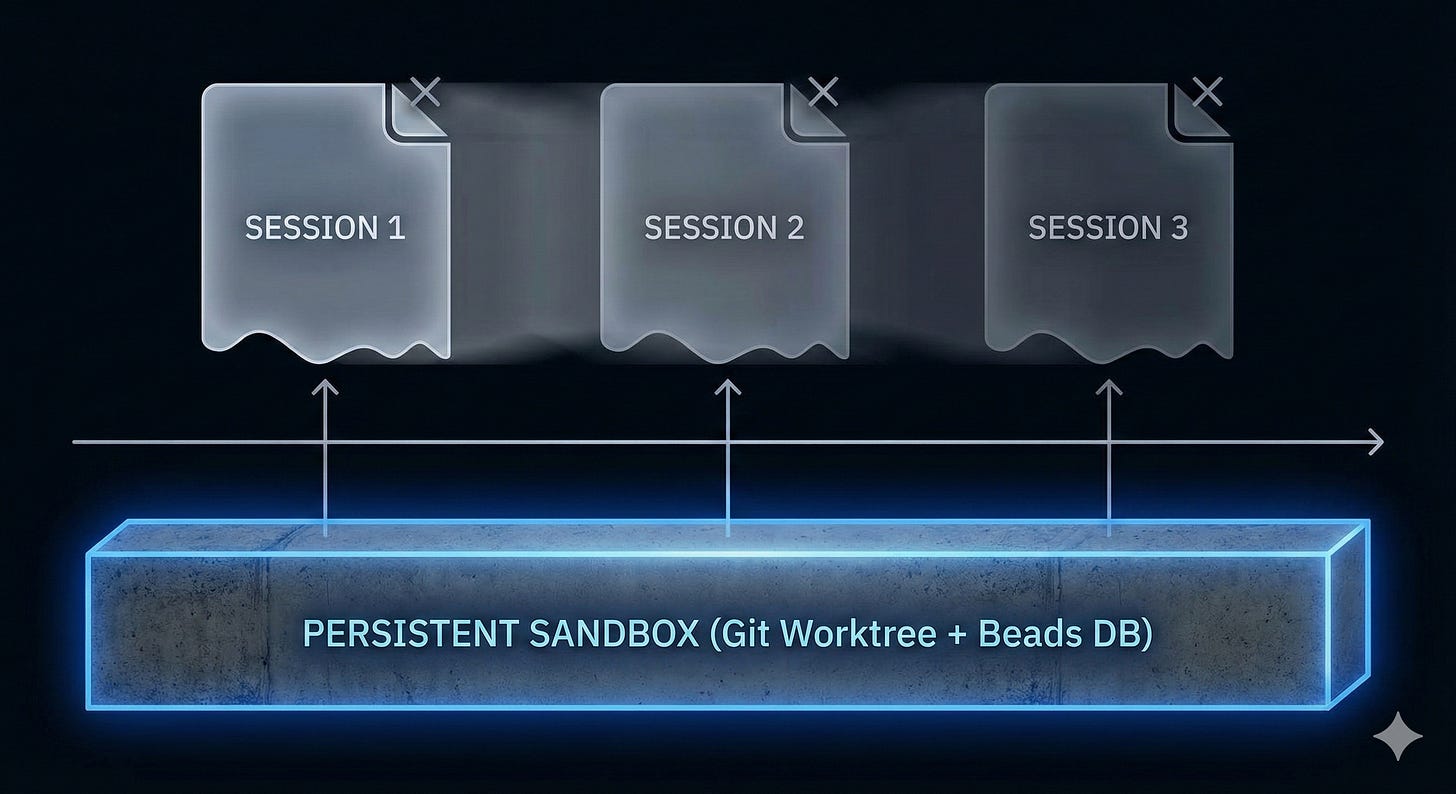

2. Sessions Die, Sandboxes Live

The most profound realization in Gastown is that agent identity and work state must be completely decoupled from the LLM session (the context window).

Standard frameworks equate the agent’s session with the agent’s state. When the context window fills up, you either lose history or pay exorbitant API costs for context management. When the session dies, the work dies.

Gastown treats the session as disposable. Instead, the Sandbox (the git worktree, the branch, and the “beads”) is what persists.

You can kill a Gastown session at any time. When a new session spins up, it inherits the full state via git and the execution hook.

Why this matters: Context windows are finite resources. By treating them as expendable and externalizing context to git, an agent can work for days across hundreds of distinct sessions without losing its place.
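The split between disposable sessions and a durable sandbox can be modeled in a few lines of Python. This is a toy simulation under stated assumptions, not Gastown's implementation: the `Sandbox` class stands in for the git worktree, branch, and beads, and the `budget` parameter is a made-up proxy for a context window filling up.

```python
from dataclasses import dataclass, field


@dataclass
class Sandbox:
    """Durable state: stands in for the worktree, branch, and beads."""
    pending: list
    done: list = field(default_factory=list)


class Session:
    """Disposable state: a context window with a fixed budget of steps."""

    def __init__(self, budget):
        if budget < 1:
            raise ValueError("budget must be at least 1")
        self.budget = budget
        self.context = []  # discarded when the session ends

    def run(self, sandbox):
        # Work until the context is full or the sandbox has nothing left.
        while sandbox.pending and len(self.context) < self.budget:
            step = sandbox.pending.pop(0)
            self.context.append(step)
            sandbox.done.append(step)  # progress lands in the sandbox


def work_until_done(sandbox, budget):
    """Cycle fresh sessions over one sandbox and count how many were used."""
    sessions = 0
    while sandbox.pending:
        Session(budget).run(sandbox)
        sessions += 1
    return sessions
```

Each `Session` starts with an empty context and still resumes exactly where the last one stopped, because the only thing it reads is the sandbox. Seven steps with a budget of three takes three sessions, and none of them needs to know the others existed.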

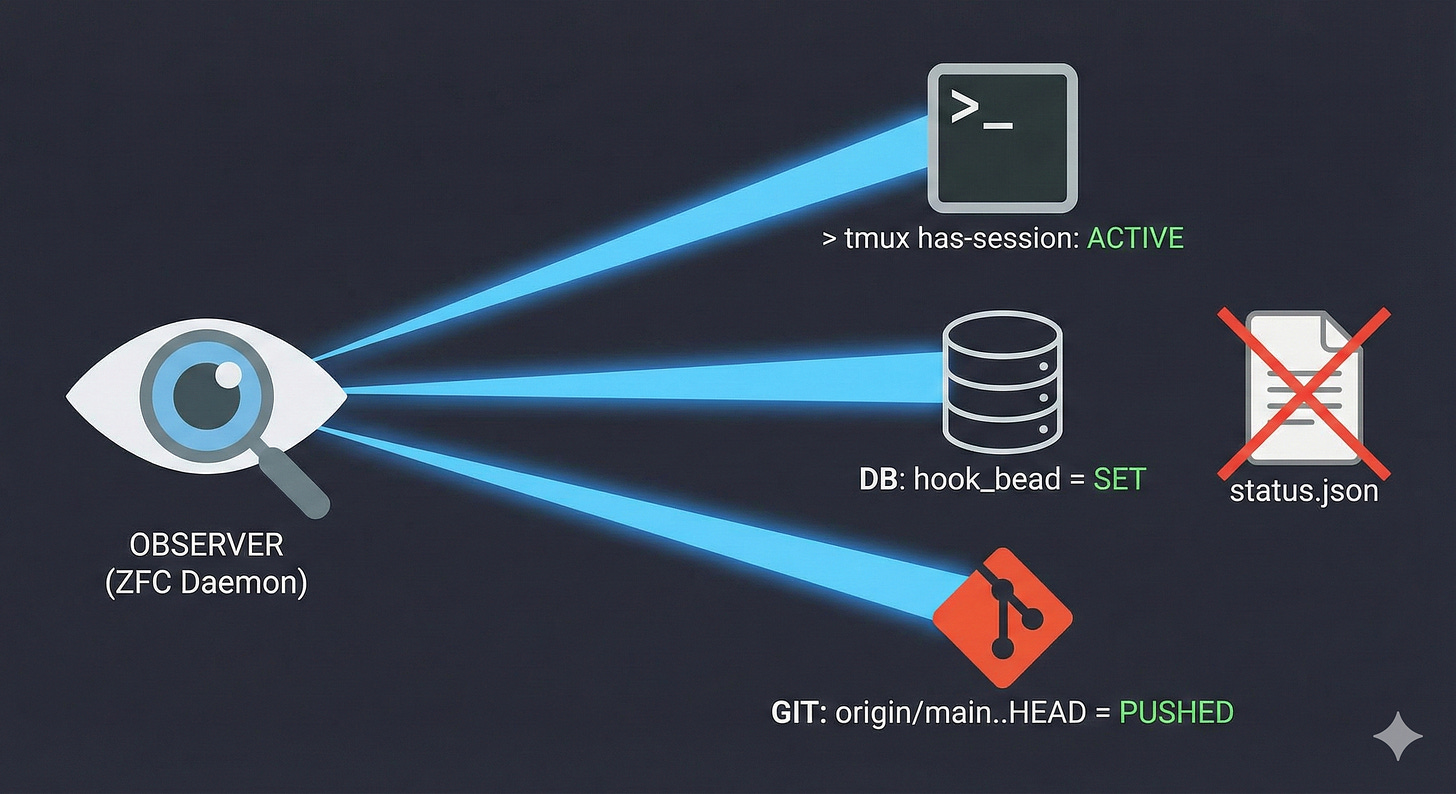

3. Observable State, Not Stored State

Gastown operates on a principle called ZFC (Zero-File-Config). It doesn’t track state in files; it observes reality directly.

In traditional systems, a state file might say an agent is running. But what if the process crashed between a write and a flush? The state file is now lying, the system gets confused, and a human has to SSH in to fix it.

Gastown asks the environment for the truth:

Is the session alive? It checks tmux has-session -t name.

What is the current work? It queries SELECT hook_bead FROM agents.

Are changes pushed? It runs git log origin/branch..HEAD.
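Two of these probes can be sketched as thin Python wrappers around the same commands. The function names, the injectable `run` parameter (there so the probes can be exercised without tmux or git installed), and the `--oneline` flag are assumptions added for this illustration, not Gastown's actual code.

```python
import subprocess


def run_command(args):
    """Run a command and return (exit_code, stdout)."""
    proc = subprocess.run(args, capture_output=True, text=True)
    return proc.returncode, proc.stdout


def session_alive(name, run=run_command):
    """Ask tmux directly: has-session exits 0 only if the session exists."""
    code, _ = run(["tmux", "has-session", "-t", name])
    return code == 0


def unpushed_commits(branch, run=run_command):
    """List commits reachable from HEAD but not from origin/<branch>."""
    code, out = run(["git", "log", "--oneline", f"origin/{branch}..HEAD"])
    if code != 0:
        # Refuse to guess: a failed probe is not the same as "all pushed".
        raise RuntimeError("git log failed; cannot observe push state")
    return [line for line in out.splitlines() if line.strip()]
```

Neither function reads or writes a status file. An empty list from `unpushed_commits` means everything is pushed, and the answer is recomputed from git every time it is asked.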

Why this matters: State files corrupt. Reality does not. By observing actual system processes (tmux, git, databases) instead of relying on tracked state, Gastown eliminates an entire class of consistency bugs. Recovery is always possible because the truth is always discoverable.

4. AI in the Patrol Loop

How do you know if an agent is stuck? A standard script uses a mechanical health check: no output for 5 minutes = dead process. But what if the agent is just thinking through a complex architectural problem? You get a false positive and kill productive work.

Gastown solves this by putting AI in the patrol loop. “The Witness” isn’t a bash script; it’s an AI agent that makes judgment calls about other agents based on a hierarchy of graduated intelligence:

Daemon: Mechanical. A 3-minute heartbeat check that detects obvious process failures.

Deacon: AI. Handles town-wide triage and cross-rig orchestration issues.

Witness: AI. Per-rig intelligence that reads a worker’s recent output, understands the context, and decides: “still thinking,” “actually stuck,” or “needs help.”
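The escalation from mechanical check to judgment call can be sketched as follows. Everything here is hypothetical scaffolding: the `worker` dictionary shape, the action strings, and the `judge` callable (a placeholder for whatever model call the Witness actually makes) are assumptions for illustration. Only the three verdicts come from the description above.

```python
from enum import Enum


class Verdict(Enum):
    THINKING = "still thinking"
    STUCK = "actually stuck"
    NEEDS_HELP = "needs help"


def daemon_check(worker):
    """Mechanical tier: detects only obvious process failure."""
    return worker["alive"]


def patrol(worker, judge):
    """Run the cheap mechanical check first, then ask for a judgment.

    `judge` is any callable that reads recent output and returns a Verdict.
    In a real system it would be an AI agent; here it is injected so the
    control flow can be shown without one.
    """
    if not daemon_check(worker):
        return "restart"  # no judgment needed for a dead process
    verdict = judge(worker["recent_output"])
    if verdict is Verdict.THINKING:
        return "leave alone"  # silence is productive, do not kill the work
    if verdict is Verdict.STUCK:
        return "nudge"
    return "escalate"
```

The point of the shape is that a quiet worker never gets killed by the mechanical tier alone. Silence only leads to action once something has actually read the output and decided what the silence means.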

Why this matters: Mechanical rules cannot understand nuance. An AI watching an AI can reason about whether silence is productive or problematic. This prevents false positives while still catching genuine cognitive loops or failures.

The Synthesis: Why These Four Together?

Gastown achieves autonomous multi-agent coordination not through overwhelming complexity, but through four simple ideas applied consistently. Remove one, and the whole system degrades:

Self-propelling work means there is no orchestrator bottleneck.

Ephemeral sessions mean context limits don’t break the workflow.

Observable state means crashes don’t permanently corrupt the system.

AI patrol means intelligent recovery happens without false positives.

When you combine them, you get 20 to 30 agents working on a shared codebase, automatically cycling through sessions, and self-healing from inevitable failures -- for as long as there is work to do.

So, that’s the underlying architecture that keeps Gastown running when other frameworks crash.
