[Deep Dive] OpenClaw

Beyond the Wrapper: The Architectural Decisions That Make OpenClaw Actually Work

Most “agentic” frameworks are just API wrappers with a tool loop. Here is the technical breakdown of why OpenClaw is an actual execution environment.

Look, we need to be honest about the current state of AI agents. Most of them are demos that look incredible in a 30-second video but fall apart the moment they hit a real-world edge case. They are usually just a static system prompt, a list of tools, and a while(true) loop that crashes the first time an API times out.

I recently pulled down the OpenClaw repository to figure out why it feels different: why it seems to handle long-horizon architectural tasks without getting confused or stuck.

The answer isn’t “better prompting.” It’s better engineering. OpenClaw isn’t an agent; it’s a composition engine. It treats the agent not as a chatbot, but as a persistent entity that is “situated” in a specific context.

Here are the specific architectural decisions that allow it to function autonomously.

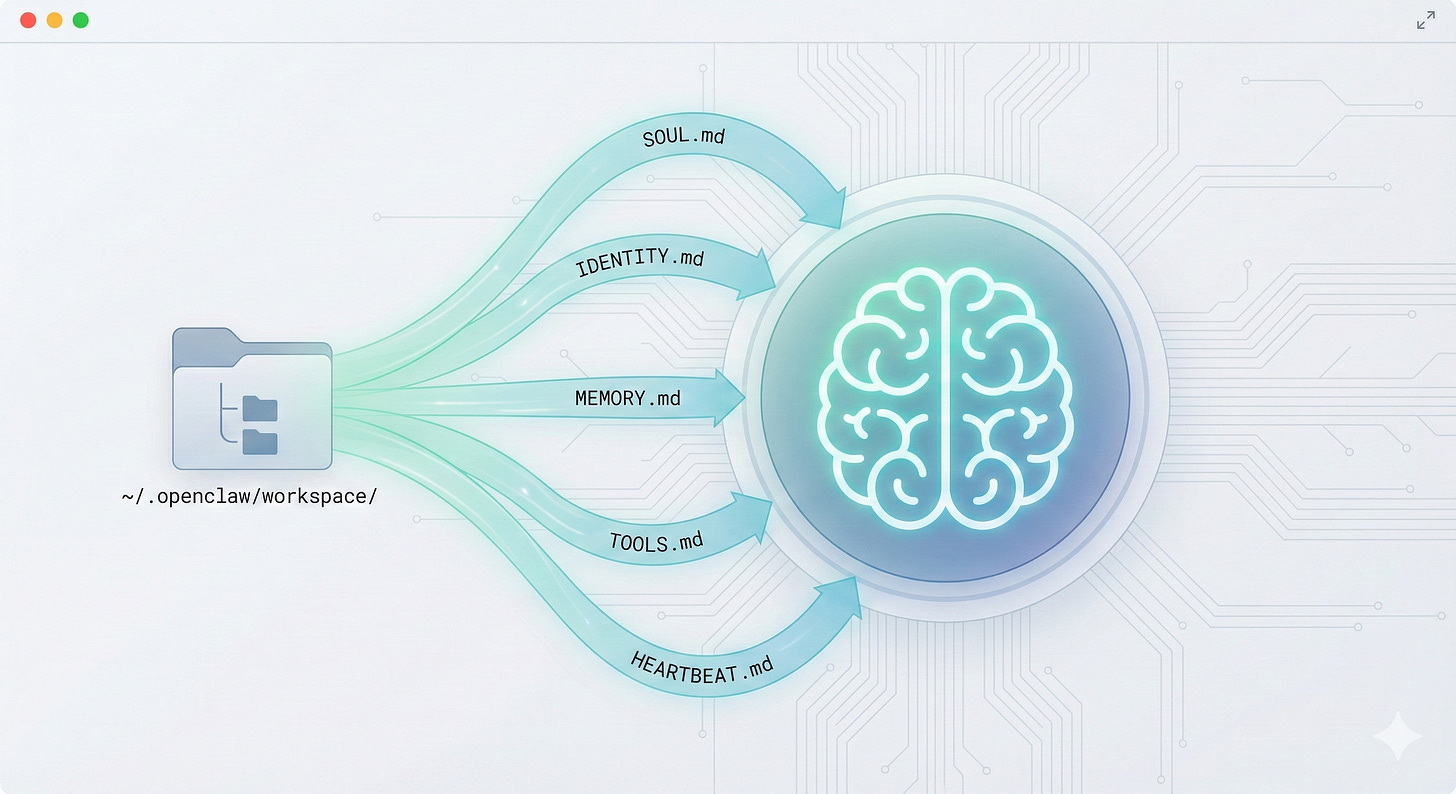

1. Identity is Rooted in the Workspace

Standard agents are amnesiacs. Every time you spin one up, you have to re-explain the rules. OpenClaw solves this by injecting a dynamic “soul” into the system prompt at runtime, pulled directly from the file system.

Before every single turn, the runtime constructs the agent’s context by reading a specific file structure:

SOUL.md: The core personality and communication style.

IDENTITY.md: The agent’s specific purpose.

MEMORY.md: Long-term facts and accumulated knowledge.

HEARTBEAT.md: Scheduled, proactive behaviors.

Why this matters:

This creates persistence. If you defined your project constraints in MEMORY.md three days ago, the agent reads them today before it answers you. It is not starting fresh; it is resuming existence.
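As a rough illustration of that per-turn assembly, here is a minimal Python sketch. The file order, the `## filename` section headers, and the name `build_system_prompt` are assumptions made for the example. The real runtime may order, format, or trim these files differently.

```python
import tempfile
from pathlib import Path

# File order is an assumption; the real runtime's order and formatting may differ.
WORKSPACE_FILES = ["SOUL.md", "IDENTITY.md", "MEMORY.md", "HEARTBEAT.md"]


def build_system_prompt(workspace: Path, base_prompt: str) -> str:
    """Assemble the system prompt from workspace files; call this before every turn."""
    sections = [base_prompt]
    for name in WORKSPACE_FILES:
        path = workspace / name
        if path.is_file():  # a missing file is skipped instead of failing the turn
            body = path.read_text(encoding="utf-8").strip()
            sections.append(f"## {name}\n{body}")
    return "\n\n".join(sections)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        workspace = Path(tmp)
        (workspace / "MEMORY.md").write_text("Target Python 3.12.", encoding="utf-8")
        # Files are re-read on each call, so an edit made days ago is still picked up.
        print(build_system_prompt(workspace, "You are a coding agent."))
```

Re-reading the files on every call, rather than caching them at startup, is what lets an edit to `MEMORY.md` take effect on the very next turn.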

2. “Skills” Are Not Just “Tools”

In most frameworks, a tool is just a JSON function signature. If the agent tries to call git but git isn’t installed, the agent crashes or hallucinates a result.

In OpenClaw, Skills are complete knowledge packages. They contain metadata about eligibility.

A skill in OpenClaw defines:

Dependencies: Does the user have this binary?

OS Compatibility: Is this Linux-only?

Installation: How do I install this if it’s missing?

The Insight: The runtime filters tools before the agent sees them. If a prerequisite is missing, the tool is invisible. This eliminates the “I tried to run this command but it failed” loop that plagues other agents.
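To make the eligibility check concrete, here is a minimal Python sketch of filtering skills before they reach the model. The `Skill` fields (`required_bins`, `supported_os`, `install_hint`) are hypothetical names chosen for illustration, not OpenClaw's actual skill schema. The only real APIs used are `shutil.which` and `sys.platform`.

```python
import shutil
import sys
from dataclasses import dataclass, field
from typing import Callable, Optional

# Signature of a PATH lookup: returns the resolved path, or None if not found.
Which = Callable[[str], Optional[str]]


@dataclass
class Skill:
    """Hypothetical skill manifest; field names are illustrative only."""
    name: str
    required_bins: list[str] = field(default_factory=list)  # binaries that must be on PATH
    supported_os: list[str] = field(default_factory=list)   # sys.platform values; empty = any
    install_hint: str = ""                                   # for a human, not the model


def is_eligible(skill: Skill, platform: str, which: Which) -> bool:
    # OS gate: compare against sys.platform-style names such as "linux" or "darwin".
    if skill.supported_os and platform not in skill.supported_os:
        return False
    # Dependency gate: every required binary must resolve.
    return all(which(binary) is not None for binary in skill.required_bins)


def visible_skills(skills: list[Skill], platform: str = sys.platform,
                   which: Which = shutil.which) -> list[Skill]:
    """Return only the skills the model should be told about on this turn."""
    return [s for s in skills if is_eligible(s, platform, which)]


if __name__ == "__main__":
    catalog = [
        Skill("git", required_bins=["git"], install_hint="install git via your package manager"),
        Skill("systemd", required_bins=["systemctl"], supported_os=["linux"]),
    ]
    print([s.name for s in visible_skills(catalog)])
```

Injecting `which` and `platform` as parameters keeps the filter testable. A runtime could surface `install_hint` to the user for any skill that was filtered out, rather than letting the model discover the missing binary by failing.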

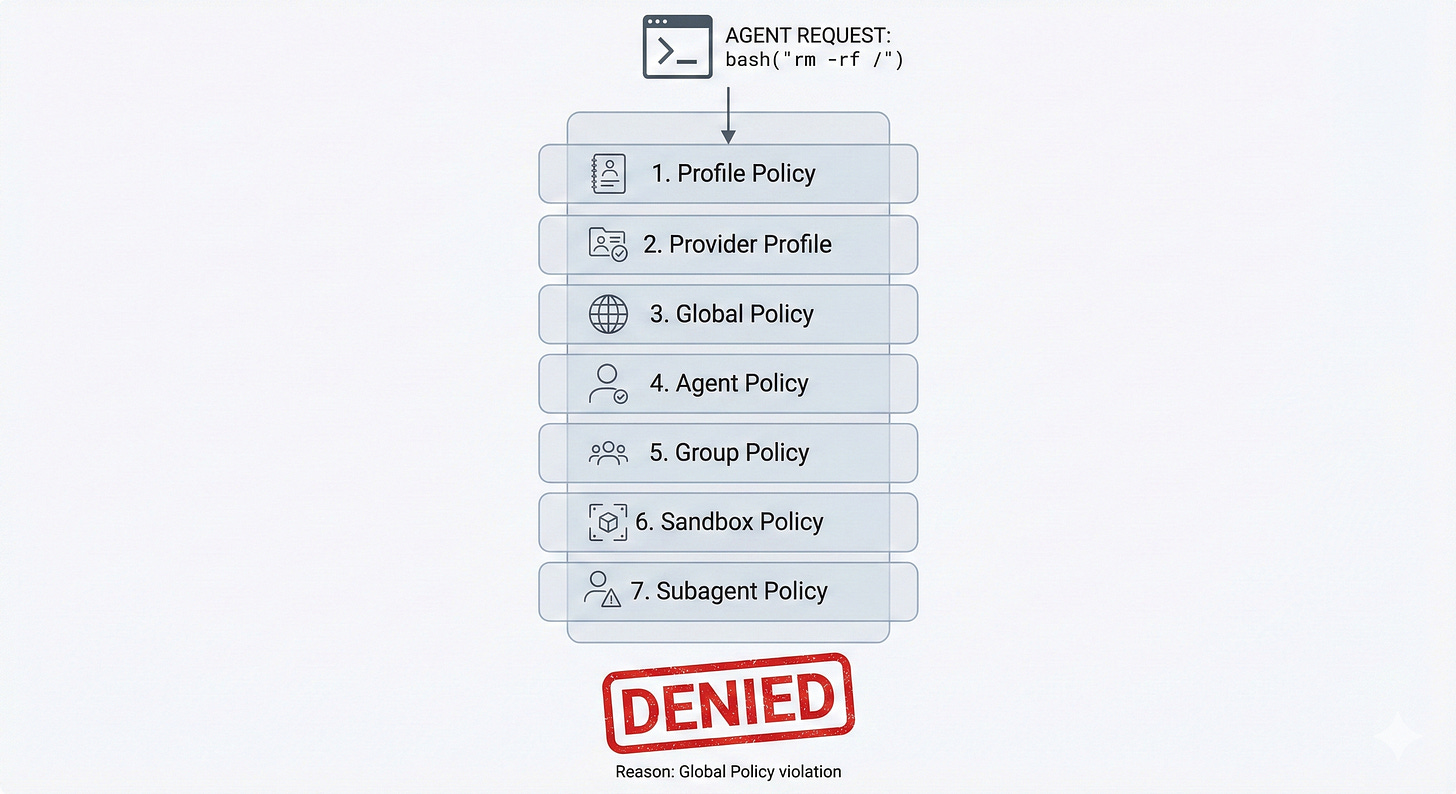

3. The Seven-Layer Policy Stack

How do you let an agent write code without letting it delete your hard drive? Most systems use a binary “allow/deny” switch. OpenClaw uses a context-aware policy stack that resolves permissions per-invocation.

When an agent tries to use a tool, the request passes through seven layers:

Profile: Does this auth profile allow it?

Provider: Does the model provider support it?

Global: Is it globally enabled?

Agent: Does this specific agent have access?

Group: Are we in a private channel (a DM) or a public one (Slack #general)?

Sandbox: What are the filesystem limits?

Subagent: If this is a child agent, what did the parent allow?

This allows for nuanced behavior. Your “DevOps” agent might have kubectl access in a private terminal session, but if you speak to it in a public Discord channel, the Group Policy layer strips that tool away automatically.
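One way to model this per-invocation resolution is an ordered list of layer checks where the first denial wins. This is a sketch under stated assumptions: the `Context` fields, the `PRIVILEGED` set, and the reduction of every layer to a simple allow-list are illustrative, not OpenClaw's real policy configuration. A real sandbox layer would also constrain paths, not just tool names.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical set of tools considered too dangerous for public channels.
PRIVILEGED = {"kubectl", "exec"}


@dataclass
class Context:
    """Per-invocation context; every field name here is illustrative."""
    profile_tools: set[str]
    provider_tools: set[str]
    global_tools: set[str]
    agent_tools: set[str]
    private_channel: bool
    sandbox_tools: set[str]
    parent_tools: Optional[set[str]] = None  # None means "not a subagent"


# Ordered layers: (name, predicate). Each predicate returns True to allow.
LAYERS: list[tuple[str, Callable[[str, Context], bool]]] = [
    ("profile", lambda t, c: t in c.profile_tools),
    ("provider", lambda t, c: t in c.provider_tools),
    ("global", lambda t, c: t in c.global_tools),
    ("agent", lambda t, c: t in c.agent_tools),
    ("group", lambda t, c: c.private_channel or t not in PRIVILEGED),
    ("sandbox", lambda t, c: t in c.sandbox_tools),
    ("subagent", lambda t, c: c.parent_tools is None or t in c.parent_tools),
]


def resolve(tool: str, ctx: Context) -> tuple[bool, Optional[str]]:
    """Check layers in order; return (allowed, name of the first denying layer)."""
    for name, allows in LAYERS:
        if not allows(tool, ctx):
            return False, name
    return True, None
```

Returning the name of the denying layer makes it easy to log why a tool disappeared, which matters once seven separate layers can each veto a call.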

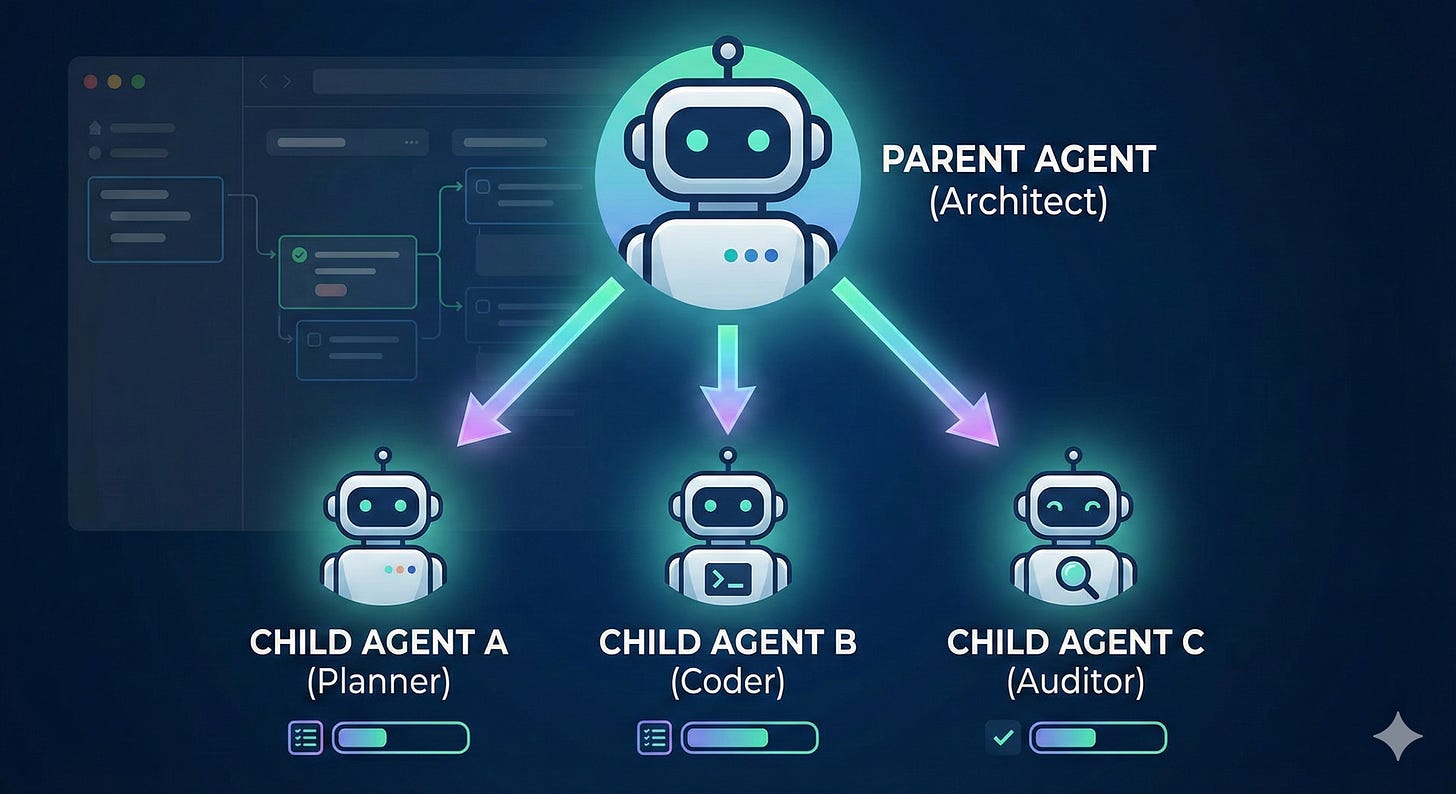

4. Recursive Spawning (The “Architect” Feature)

This is the feature that enables true autonomous workflows. OpenClaw agents have a tool called sessions_spawn.

This allows a parent agent to decompose a complex objective into sub-tasks and spawn specialized child agents to handle them.

Parent: “I need to refactor the auth system.”

Action: Spawns Agent A (High thinking capability) to plan the architecture.

Action: Spawns Agent B (Coding specialist) to write the tests.

Action: Spawns Agent C (Security auditor) to review the code.

These run in parallel. The parent acts as the orchestrator, synthesizing the results. This is how you get “architectural” behavior: by mimicking an engineering team rather than a lone developer.
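The fan-out/fan-in pattern behind this kind of spawning can be sketched with `asyncio.gather`. `SubTask`, `run_child`, and `orchestrate` are hypothetical names: the sketch does not reproduce the actual `sessions_spawn` signature, and `run_child` only simulates a child session instead of calling a model. It also assumes the sub-tasks are independent, so they can safely run concurrently.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class SubTask:
    role: str       # e.g. "planner", "tester", "auditor"
    model: str      # hypothetical model-tier label, e.g. "high"
    objective: str


async def run_child(task: SubTask) -> str:
    """Stand-in for a spawned child session; a real one would call a model."""
    await asyncio.sleep(0)  # yield to the event loop, as a network call would
    return f"[{task.role}/{task.model}] done: {task.objective}"


async def orchestrate(goal: str, tasks: list[SubTask]) -> str:
    # Fan out: every child runs concurrently; gather keeps results in input order.
    results = await asyncio.gather(*(run_child(t) for t in tasks), return_exceptions=True)
    # Fan in: the parent folds child outputs (or failures) into one report.
    lines = [
        f"[{t.role}] failed: {r!r}" if isinstance(r, BaseException) else r
        for t, r in zip(tasks, results)
    ]
    return "\n".join([f"Goal: {goal}", *lines])


if __name__ == "__main__":
    plan = [
        SubTask("planner", "high", "plan the auth architecture"),
        SubTask("tester", "coder", "write the tests"),
        SubTask("auditor", "security", "review the code"),
    ]
    print(asyncio.run(orchestrate("refactor the auth system", plan)))
```

Passing `return_exceptions=True` means a failed child shows up as an entry in the report instead of raising out of `orchestrate` and discarding its siblings' results.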

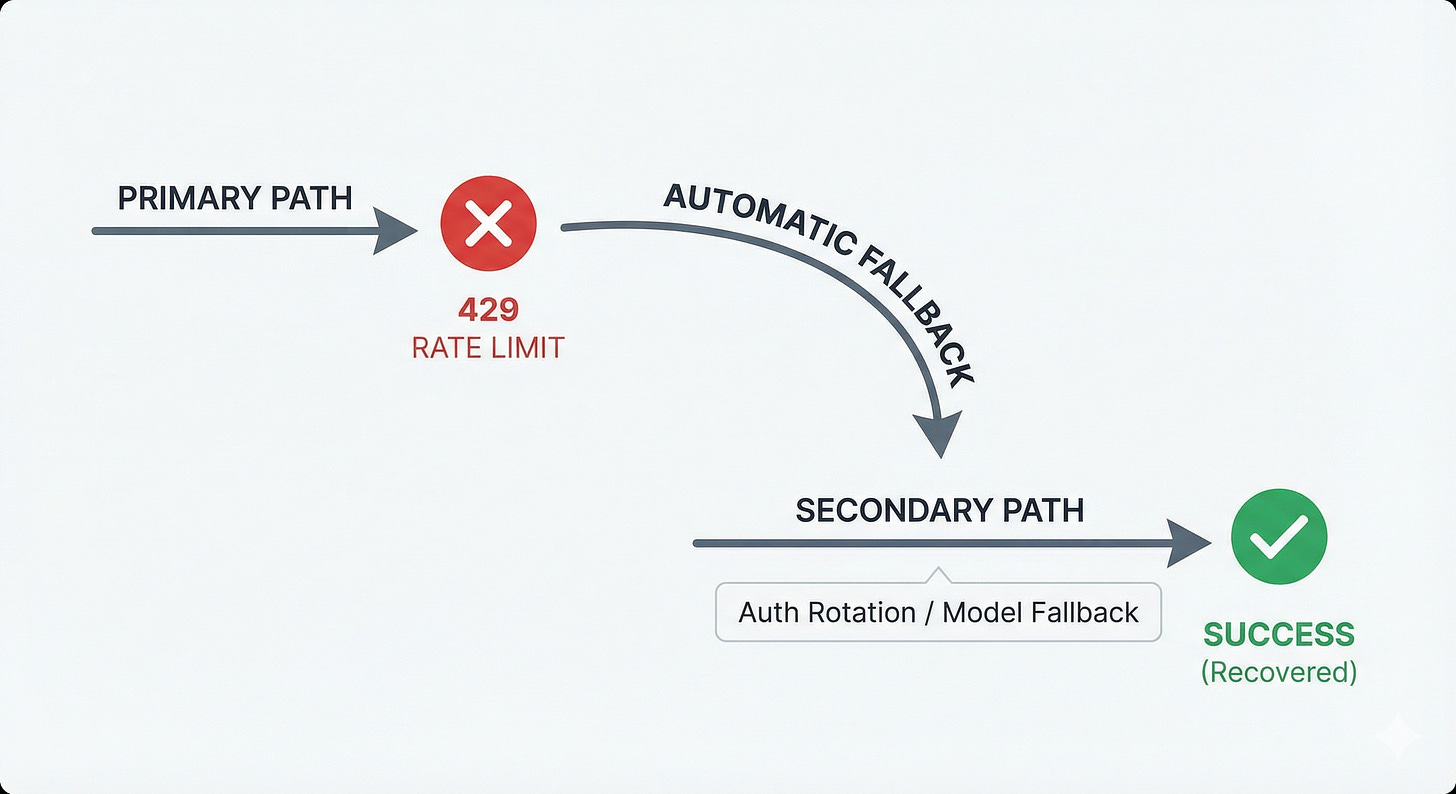

5. Graceful Degradation is Mandatory

To run autonomously for hours, you cannot crash on a network blip. OpenClaw assumes failure is inevitable and builds fallback chains at every layer:

Auth Rotation: If a key hits a rate limit, it rotates to the next credential.

Model Fallback: If the “High” thinking model is down or limited, it automatically downgrades to a “Medium” model to keep the task moving.

Context Compaction: If the context window fills up, it doesn’t just truncate the end. It compacts the history, preserving tool results while summarizing the chatter.
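The fallback chain and the compaction step can each be sketched as a small function. Everything here is hypothetical: `RateLimited`, `ModelUnavailable`, the injected `call` and `summarize` callables, and the `Message` shape stand in for whatever OpenClaw actually uses. Model tiers are tried in order, credentials rotate within a tier, and compaction keeps tool results verbatim while summarizing older conversational turns.

```python
from dataclasses import dataclass
from typing import Callable


class RateLimited(Exception):
    """Hypothetical error: this credential hit its rate limit."""


class ModelUnavailable(Exception):
    """Hypothetical error: this model tier is down or over capacity."""


def call_with_fallback(prompt: str, models: list[str], keys: list[str],
                       call: Callable[[str, str, str], str]) -> str:
    """Try model tiers in order (e.g. "high" then "medium"), rotating keys within each."""
    errors: list[str] = []
    for model in models:
        for key in keys:
            try:
                return call(model, key, prompt)
            except RateLimited:
                errors.append(f"{model}/{key}: rate limited")  # rotate to the next key
            except ModelUnavailable:
                errors.append(f"{model}: unavailable")
                break  # other keys cannot fix an outage; downgrade to the next tier
    raise RuntimeError("all fallbacks exhausted: " + "; ".join(errors))


@dataclass
class Message:
    role: str  # "user", "assistant", or "tool"
    content: str


def compact(history: list[Message], keep_recent: int,
            summarize: Callable[[list[Message]], str]) -> list[Message]:
    """Summarize older chatter, keeping tool results and the newest turns verbatim."""
    if len(history) <= keep_recent:
        return history
    split = len(history) - keep_recent
    old, recent = history[:split], history[split:]
    chatter = [m for m in old if m.role != "tool"]
    tool_results = [m for m in old if m.role == "tool"]  # kept verbatim, in original order
    summary = Message("assistant", "Summary of earlier conversation: " + summarize(chatter))
    # Note: preserved tool results are moved ahead of the recent turns.
    return [summary, *tool_results, *recent]
```

Treating a rate limit and an outage differently is the key design choice here: rotating keys cannot fix a model that is down, so that case drops straight to the next tier.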

The Bottom Line: Situated Agency

The reason OpenClaw feels “smart” isn’t because the LLM is better. It’s because the environment is richer.

It operates on the principle of Situated Agency. The agent understands who it is (Identity), where it is (Context), what it can physically do (Eligible Skills), and what it is allowed to do (Policy).

When you combine those factors, you stop getting a chatbot, and you start getting a system capable of independent work.

